
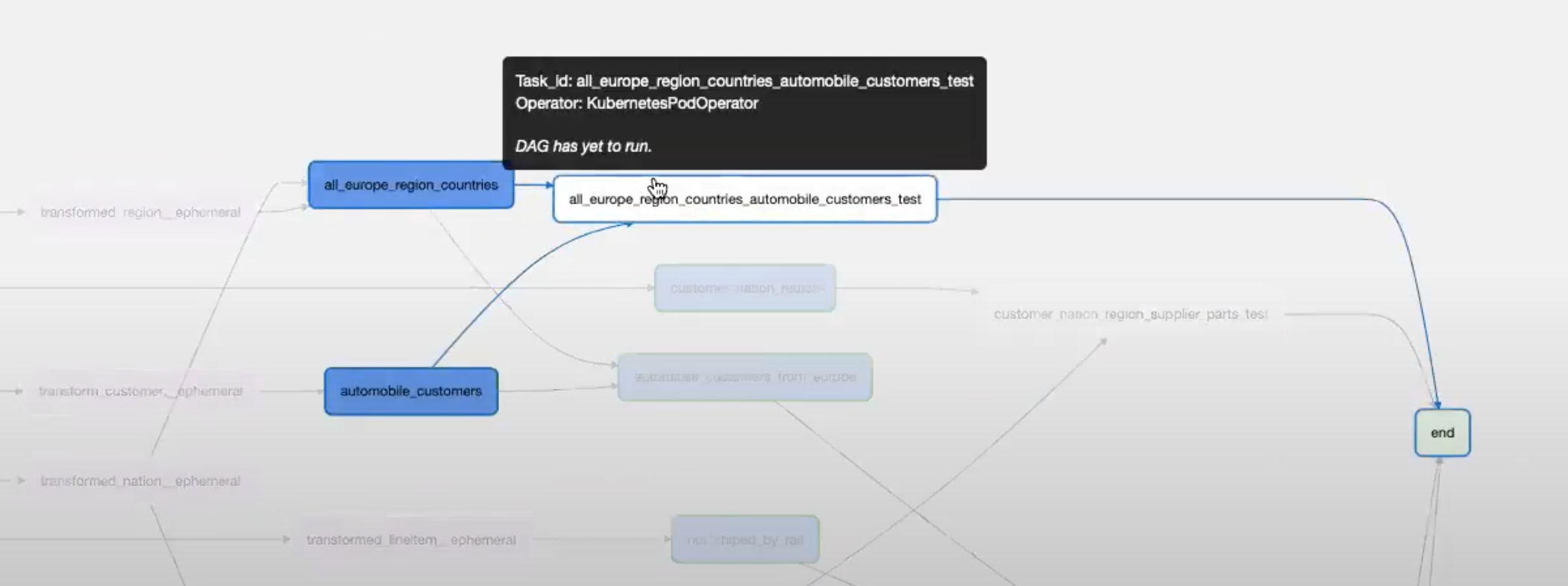

Note: All of the code in this post is available in this GitHub repository and can be run locally using the Astronomer CLI.

Editor's Note

At Astronomer, we're often asked how to integrate Apache Airflow with specialized data tools that accommodate certain usage patterns. A tool that often comes up in conversation is dbt, an open-source library for analytics engineering that helps users build interdependent SQL models for in-warehouse data transformation. The portion of the modern data engineering workflow that dbt addresses is significant. As ephemeral compute becomes more readily available in the data warehouse itself thanks to tools like Snowflake, data engineers have embraced an ETL->ELT paradigm shift that encourages loading raw data directly into the warehouse and doing transformations on top of that ephemeral compute. dbt helps users write, organize, and run these in-warehouse transformations.

Given the complementary strengths of both tools, it's common to see teams use Airflow to orchestrate and execute dbt models within the context of a broader ELT pipeline that runs on Airflow and exists as a DAG. In chatting with a handful of Astronomer customers who have spent time exploring solutions at the intersection of Airflow and dbt, we discovered that our friends at Updater have built a particularly great experience for authoring, scheduling, and deploying dbt models to their Airflow environment. With that, we collaborated with John Lynch, Senior Data Engineer, and Flavien Bessede, Data Engineering Manager at Updater, to turn the brilliant work they've done into this two-part series covering the various approaches, considered limitations, and final output of a team that has put endless thought into building a scalable data architecture at the intersection of dbt and Airflow.

This is because your dbt project home is set in the dags directory. Usually, in a deployment that is not your local deployment started by the astro CLI, this works. However, the local deployment spun up by the astro CLI mounts the dags directory from your local machine into the containers. When you execute dbt run, it will try to create a logs directory in the dbt root directory, so dbt will attempt to create a directory in the dags directory. Due to the way the dags directory in your local deployment is mounted, Docker will not allow any user in the container to write to the mounted directory. As you can see, the mounted directory is read only ("ro").

"Source": "/host_mnt/Users/alan/projects/astro/dags",
"Destination": "/usr/local/airflow/dags",

Read-only file system: 'logs/dbt.log'

A way around this is to have your dbt root directory in the root astro folder.

Hi, I wanted to follow up to see if any of the above strategies worked for you. I too am facing a similar problem when I try running astro airflow in my local environment. I tried your advice and tried to write logs into the dbt root directory, with no success:

Cmd: ['bash', '-c', 'dbt --no-write-json run --profiles-dir /usr/local/airflow/dags/dbt/dbt_profiles --project-dir /usr/local/airflow/dags/dbt/product_health_edp_dbt

I tried different strategies for disabling dbt logs, but none of them have worked. dbt modules in my implementation are invoked using the bash operator, so I am looking for a way to circumvent this.

Tried: /var/local/airlfow got error: Read-only file system: 'target/partial_parse.msgpack'
Tried: /usr/local/airflow/dags/ got error: Read-only file system: '/usr/local/airflow/dags/'
Tried: /usr/local/ got error: Permission denied: '/usr/local/'

Any tip or advice would be really helpful.
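One possible way to sidestep the read-only mount described above is to copy the dbt project to a writable scratch location and point dbt at the copy. The following is only a minimal sketch, not something taken from the thread: the helper names `stage_dbt_project` and `dbt_run_command` are hypothetical, and it assumes dbt writes its `logs/` and `target/` directories under the project directory or the current working directory, as the errors quoted here suggest.

```python
import shutil
import tempfile
from pathlib import Path
from typing import List


def stage_dbt_project(project_dir: str) -> Path:
    """Copy a dbt project into a fresh, writable temp directory.

    Hypothetical helper: returns the path of the copy, leaving the
    original (possibly read-only) project untouched.
    """
    scratch = Path(tempfile.mkdtemp(prefix="dbt_run_"))
    staged = scratch / Path(project_dir).name
    shutil.copytree(project_dir, staged)
    return staged


def dbt_run_command(staged_project: Path, profiles_dir: str) -> List[str]:
    """Build the argument list for `dbt run` against the staged copy."""
    return [
        "dbt", "run",
        "--project-dir", str(staged_project),
        "--profiles-dir", profiles_dir,
    ]
```

A caller could then run the command with the staged copy as the working directory, for example `subprocess.run(dbt_run_command(staged, profiles_dir), cwd=staged, check=True)`, so that relative paths such as `logs/dbt.log` resolve inside the writable copy. Whether this fits a given Airflow setup depends on how the task is launched; with the bash operator, the same idea would be a copy followed by a `cd` before the dbt call.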
